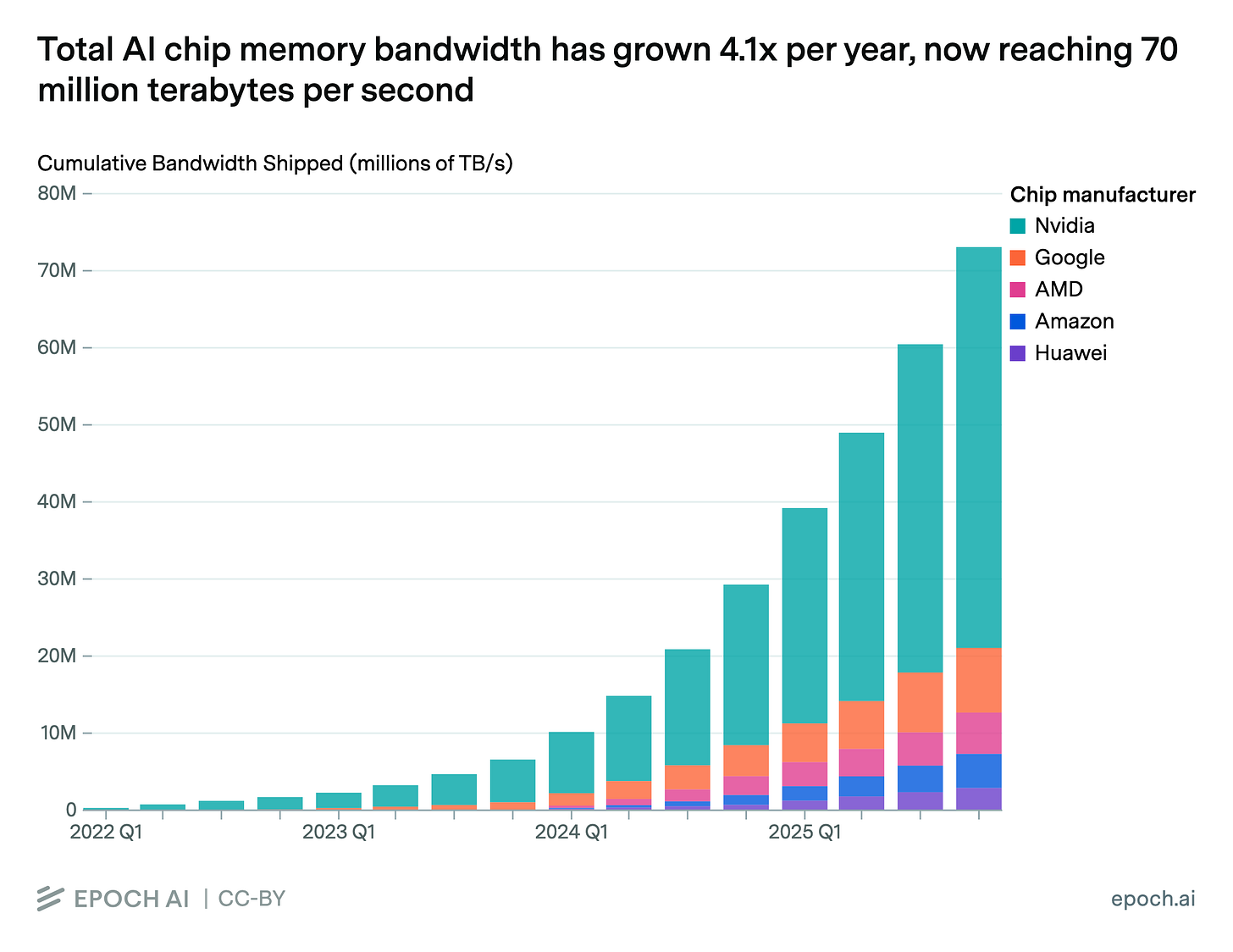

The total memory bandwidth of AI chips shipped since 2022 has reached 70 million terabytes per second, growing 4.1x per year. That’s around 300,000x more data per second than global internet traffic.
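A little arithmetic turns the two headline figures into more intuitive quantities. The Python sketch below back-calculates the global internet traffic implied by the "around 300,000x" ratio. It also computes the doubling time implied by 4.1x annual growth. Both results come only from the numbers quoted above, so they are as approximate as those numbers. The variable names are illustrative.

```python
import math

# Figures quoted in the text above.
total_bandwidth_tb_per_s = 70e6   # cumulative AI-chip memory bandwidth, TB/s
ratio_to_internet = 300_000       # "around 300,000x" global internet traffic
annual_growth = 4.1               # growth factor per year

# Internet traffic implied by the ratio (back-calculated, not an independent estimate).
implied_internet_tb_per_s = total_bandwidth_tb_per_s / ratio_to_internet

# Doubling time implied by 4.1x per year compound growth: solve 4.1**t = 2 for t.
doubling_time_years = math.log(2) / math.log(annual_growth)

print(f"Implied internet traffic: {implied_internet_tb_per_s:.0f} TB/s")  # 233 TB/s
print(f"Doubling time: {doubling_time_years * 12:.1f} months")           # 5.9 months
```

In other words, 4.1x per year means the installed base of bandwidth roughly doubles every six months.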

Why track this? AI inference is frequently bottlenecked by memory bandwidth rather than compute. Aggregate HBM (high-bandwidth memory) bandwidth is therefore a rough proxy for the world's total capacity to serve AI models.
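The bandwidth bottleneck is easiest to see in token-by-token generation. Here, a dense model must stream all of its weights from memory for each generated token. The sketch below computes the resulting ceiling on single-stream decode speed, tokens/s ≤ bandwidth / weight bytes. It assumes batch size 1, ignores KV-cache traffic and compute entirely, and uses hypothetical example values (a 3.35 TB/s accelerator and a 70B-parameter model with 16-bit weights) that do not come from the text. Batching reuses each weight read across many sequences, which is one reason aggregate bandwidth is only a rough proxy.

```python
import math

def max_decode_tokens_per_s(bandwidth_bytes_per_s: float,
                            n_params: float,
                            bytes_per_param: float) -> float:
    """Memory-bound ceiling on single-stream decode speed.

    Assumes every weight is read from memory once per generated token
    (dense model, batch size 1) and ignores KV-cache traffic and compute.
    """
    weight_bytes = n_params * bytes_per_param
    return bandwidth_bytes_per_s / weight_bytes

# Hypothetical example values, not taken from the text.
bandwidth = 3.35e12   # 3.35 TB/s of memory bandwidth on one accelerator
params = 70e9         # a 70B-parameter dense model
bytes_per_param = 2   # 16-bit weights

ceiling = max_decode_tokens_per_s(bandwidth, params, bytes_per_param)
print(f"{ceiling:.1f} tokens/s")  # 23.9 tokens/s

# Doubling bandwidth doubles the ceiling; extra FLOPs would not change it.
assert math.isclose(max_decode_tokens_per_s(2 * bandwidth, params, bytes_per_param),
                    2 * ceiling)
```

Because compute does not appear in the formula at all, adding FLOPs leaves this ceiling unchanged. Only more bandwidth (or smaller weights) raises it.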

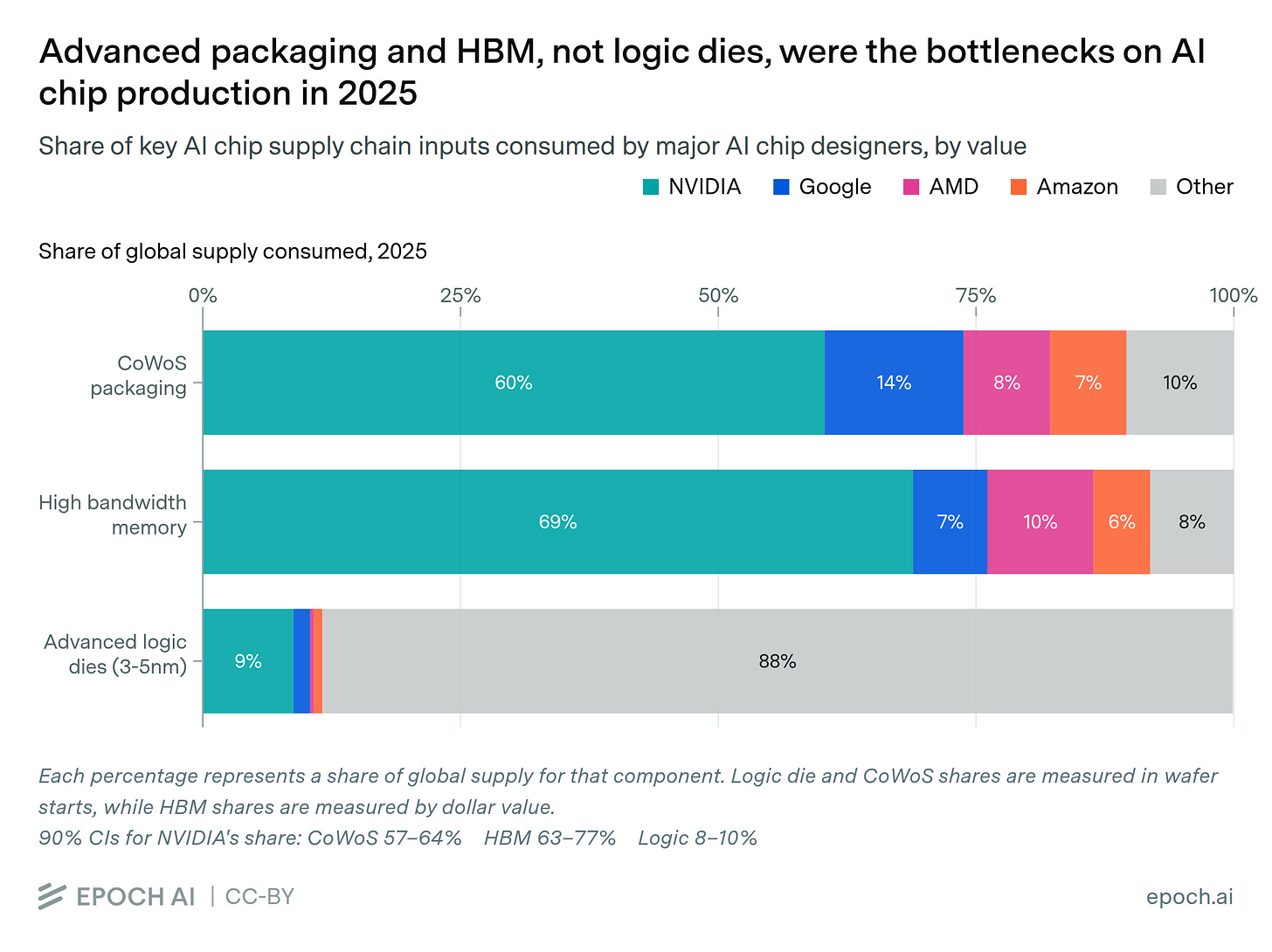

Demand for HBM has outpaced supply, causing prices to spike in early 2026. We recently found that AI chips consumed over 90% of total HBM production in 2025.
