FrontierMath Tier 4: Battle Royale

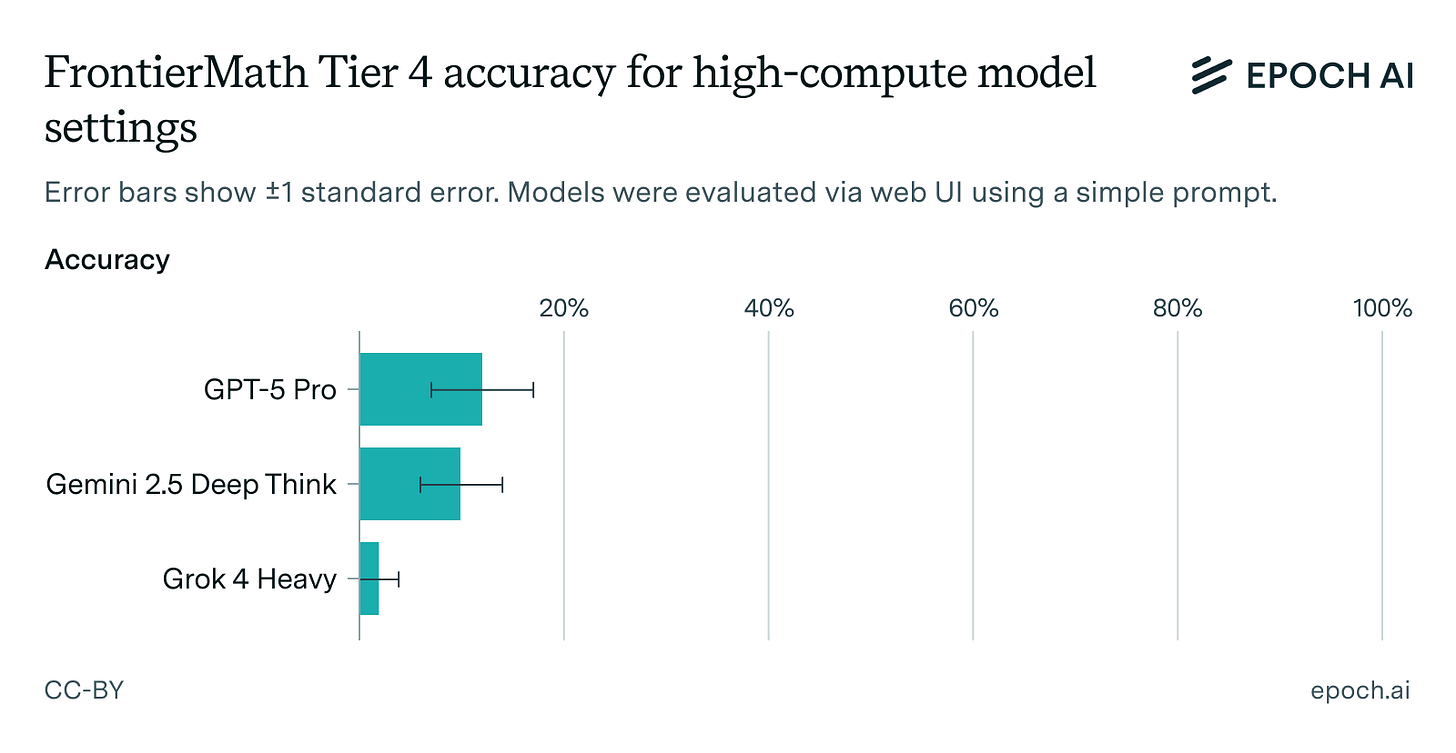

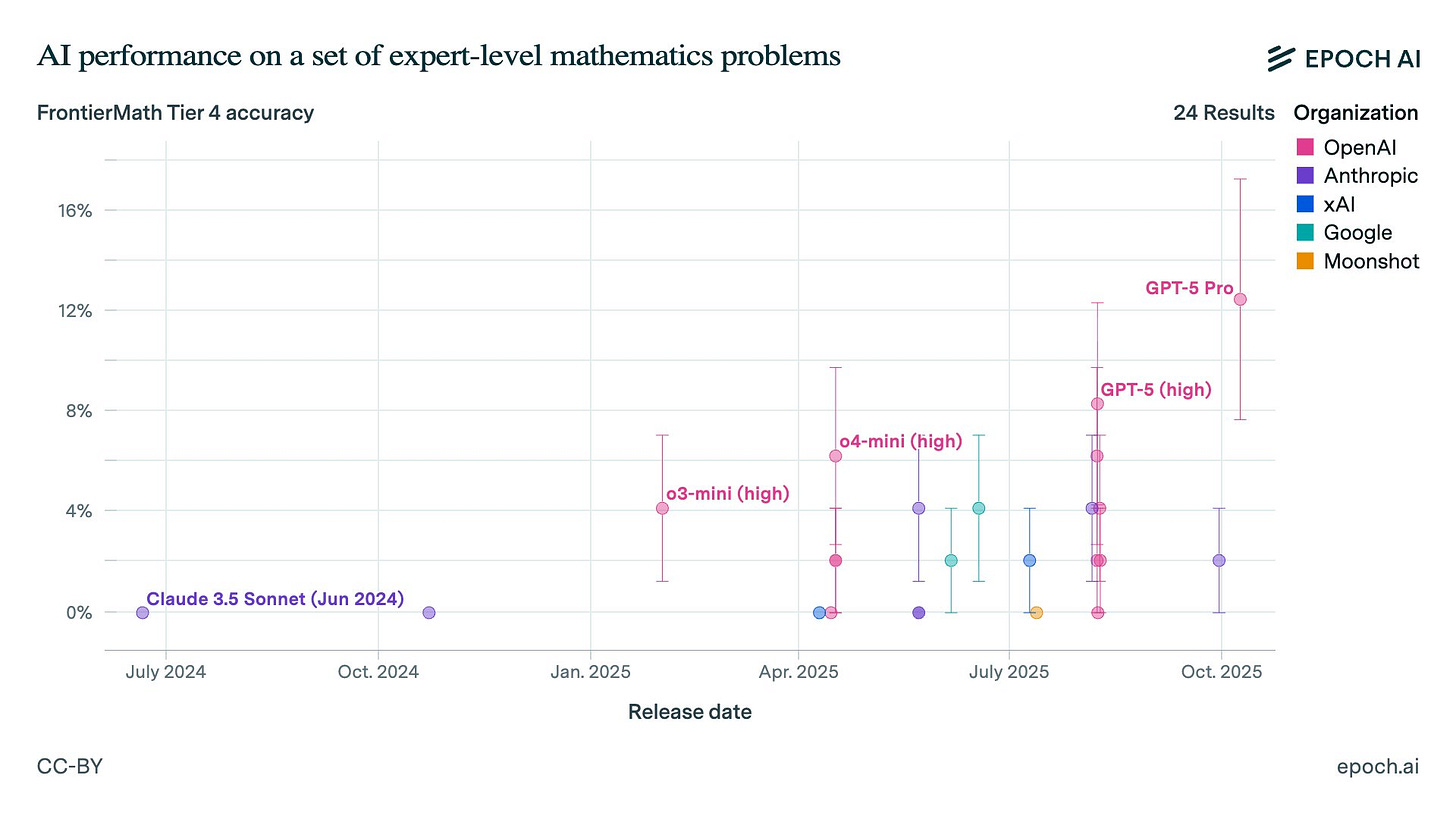

GPT-5 Pro set a new record (13%), edging out Gemini 2.5 Deep Think by a single problem (not statistically significant). Grok 4 Heavy lags.
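To see why a one-problem gap is not statistically significant, here is a rough sketch using a two-sided Fisher exact test. It assumes 6 of 48 solved for GPT-5 Pro and 5 of 48 for Gemini 2.5 Deep Think (the latter inferred from "a single problem"), and it treats the two runs as independent samples. That is a simplification, since both models saw the same 48 problems, and this is not necessarily the test used for the claim above. The helper name `fisher_exact_two_sided` is ours.

```python
from math import comb

def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher exact p-value for the 2x2 table [[a, b], [c, d]]."""
    row1, row2 = a + b, c + d
    col1 = a + c
    n = row1 + row2

    def prob(x):
        # Hypergeometric probability of x successes in row 1, margins fixed.
        return comb(row1, x) * comb(row2, col1 - x) / comb(n, col1)

    p_observed = prob(a)
    lo, hi = max(0, col1 - row2), min(row1, col1)
    # Sum every table that is at most as likely as the observed one.
    return sum(prob(x) for x in range(lo, hi + 1)
               if prob(x) <= p_observed * (1 + 1e-9))

TOTAL = 48
gpt5_pro_solved = 6
gemini_solved = 5  # assumption: inferred from "a single problem" fewer

p_value = fisher_exact_two_sided(
    gpt5_pro_solved, TOTAL - gpt5_pro_solved,
    gemini_solved, TOTAL - gemini_solved,
)
print(f"two-sided p-value: {p_value:.3f}")
```

Under these assumptions the p-value is far above the usual 0.05 threshold, so the two scores are statistically indistinguishable.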

We manually evaluated three compute-intensive model settings (GPT-5 Pro, Gemini 2.5 Deep Think, and Grok 4 Heavy) on our extremely hard math benchmark.

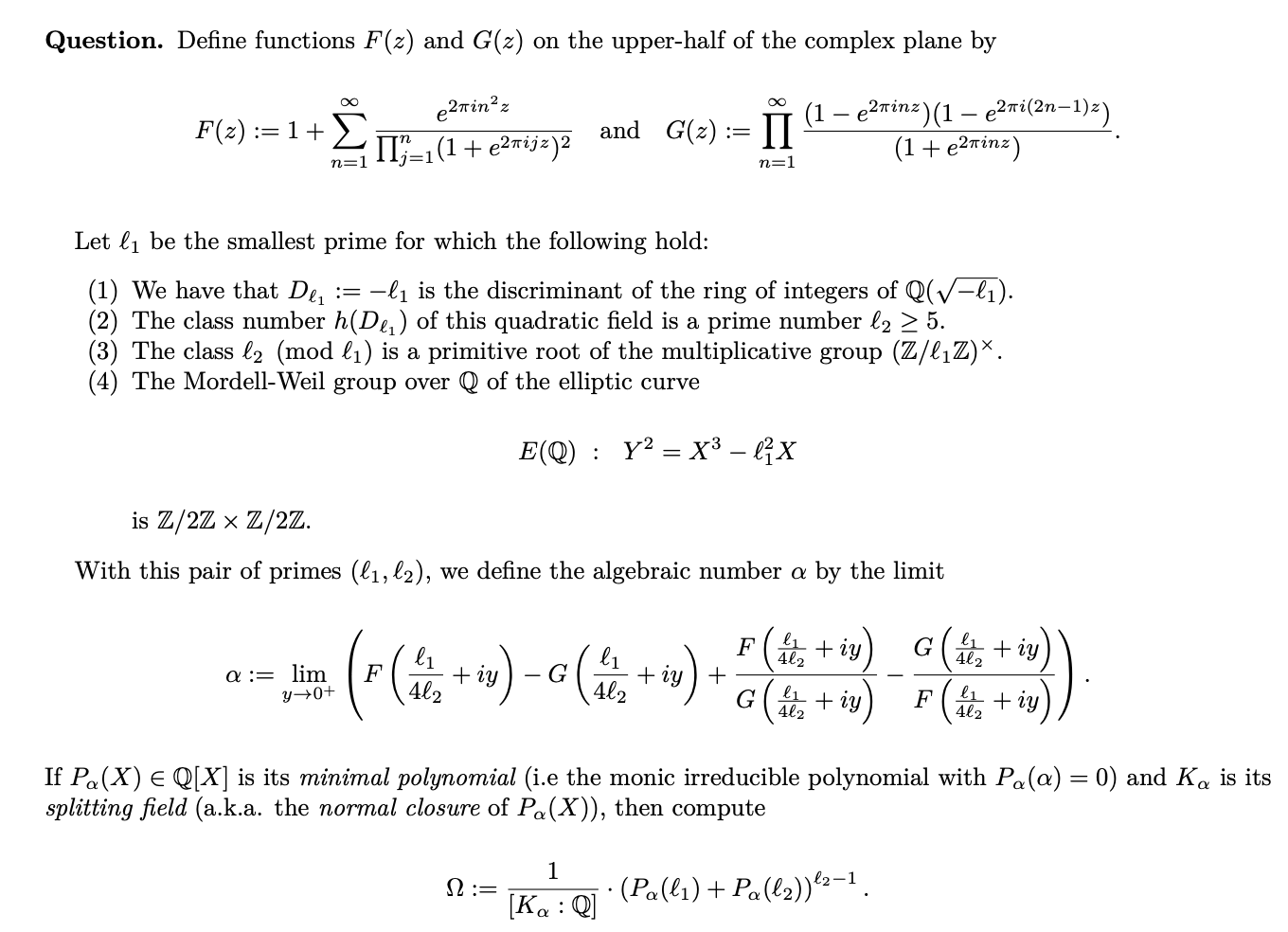

FrontierMath Tier 4 consists of 50 research-level math problems developed by professional mathematicians. These problems can take experts weeks to solve. Below is one of the two public samples. We evaluate on the other 48.

As of last week, none of these models had an API, so we used a simple prompt in the web apps and graded the results manually. The models had web search and code execution tools. Web search is valid for FrontierMath: the problems are not public, and looking up math papers is allowed.

GPT-5 Pro now has an API, so we also evaluated it on our usual scaffold and put the result on our benchmarking hub. Here it solved six problems (13%): the same score as the web app run, but not all the same problems. Combined, GPT-5 Pro’s pass@2 is eight problems (17%).
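The numbers above pin down how much the two GPT-5 Pro runs overlapped. A minimal sketch of the arithmetic, using only the counts stated in this post (variable names are ours):

```python
TOTAL_PROBLEMS = 48      # Tier 4 problems we evaluate on
web_app_solved = 6       # GPT-5 Pro, web app run
api_solved = 6           # GPT-5 Pro, API run on the usual scaffold
solved_in_either = 8     # pass@2: solved in at least one of the two runs

# Inclusion-exclusion: |A and B| = |A| + |B| - |A or B|
solved_in_both = web_app_solved + api_solved - solved_in_either

def percent(count):
    # Round half up; Python's built-in round() would turn 12.5 into 12.
    return int(100 * count / TOTAL_PROBLEMS + 0.5)

print(f"solved in both runs: {solved_in_both}")
print(f"single run: {percent(api_solved)}%, pass@2: {percent(solved_in_either)}%")
```

So four problems were solved in both runs, and each run solved two problems that the other missed.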

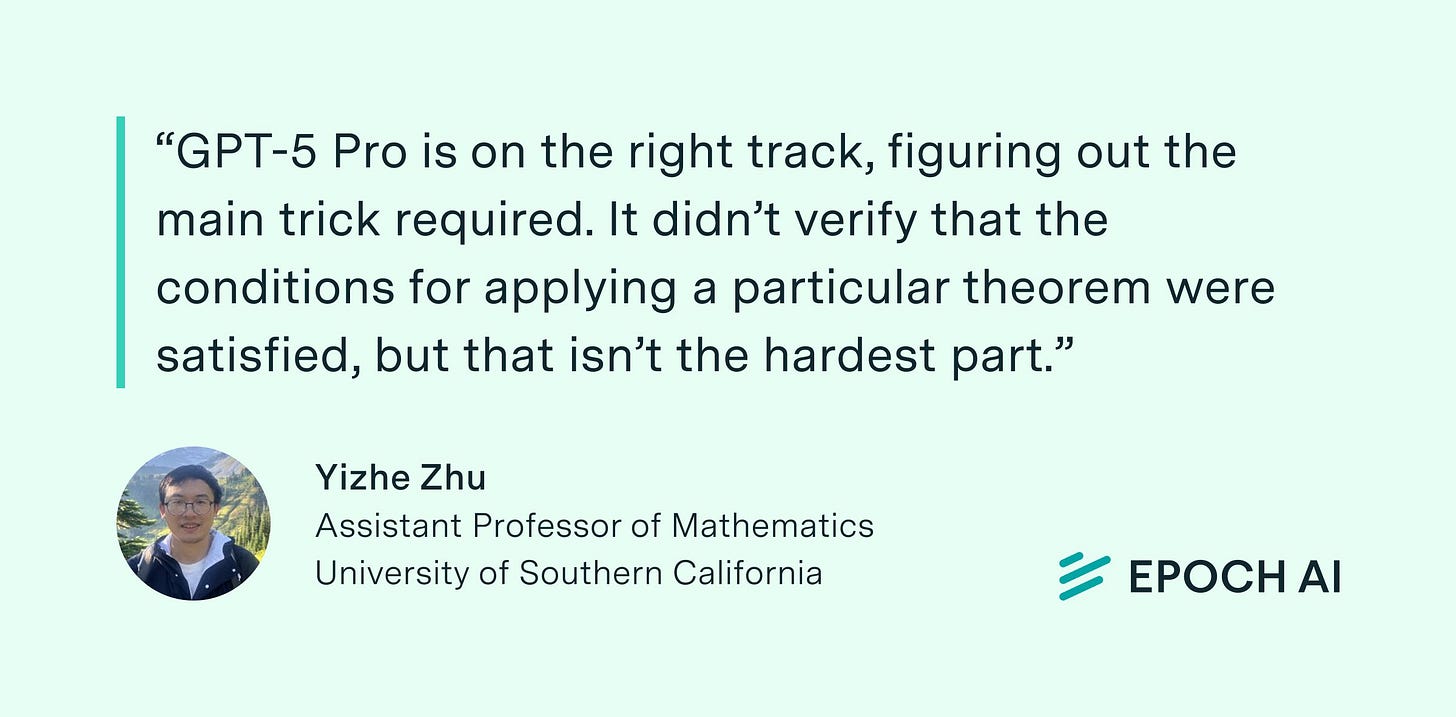

One problem solved in both GPT-5 Pro runs has not been solved by any other model. The problem author had this to say about it.

OpenAI, which funded FrontierMath, has access to 28/48 problems and solutions. Epoch holds out the remaining 20 problems and solutions. Of the eight problems solved at least once by GPT-5 Pro, five are in the held-out set.
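For context, the split can be turned into per-subset solve rates. This is only a sketch of the arithmetic from the counts above (variable names are ours). With so few solved problems, it should not be read as a statistical conclusion.

```python
TOTAL_PROBLEMS = 48
openai_accessible = 28   # problems and solutions OpenAI has access to
held_out = 20            # problems and solutions held out by Epoch
solved_total = 8         # solved at least once by GPT-5 Pro
solved_held_out = 5      # of those, in the held-out set

# The two subsets should partition the 48 evaluated problems.
assert openai_accessible + held_out == TOTAL_PROBLEMS

solved_accessible = solved_total - solved_held_out

held_out_rate = solved_held_out / held_out               # 5 / 20
accessible_rate = solved_accessible / openai_accessible  # 3 / 28

print(f"held-out solve rate:   {held_out_rate:.1%}")
print(f"accessible solve rate: {accessible_rate:.1%}")
```

GPT-5 Pro's solve rate on the held-out problems is at least as high as on the problems OpenAI can access.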

Previously, eight Tier 4 problems had been solved at least once, and all eight were solved again across these high-compute runs. Adding the one new problem solved by GPT-5 Pro brings the total number ever solved to nine, or 19% of the benchmark.

For more on FrontierMath, and more analysis of AI math capabilities, check out our website!